My experience in robot control

from standard methods

Hello, everyone. I'd like to thank the reviewers for attending my presentation, albeit remotely.

I am Gastone Pietro Rosati Papini, a postdoctoral researcher at the University of Trento,

and today I will introduce you to "Modeling and control of intelligent robots from standard methods to adaptive physical and bio inspired data driven approaches" through my career.

Today robotics is moving out of the factories, and this is opening up interesting new challenges.

Here are some examples:

- Dealing with humans or uncertain environments

- Guaranteeing safety

- Dealing with failures or partial and uncertain measurements

- And others that are listed on the slide

Let's consider the standard approaches: what is their most relevant limitation?

It is difficult to model everything, especially in the presence of uncertainties.

But the behaviour of mechanical systems is well known and predictable, so we can guarantee stability and safety.

On the other hand, we have data-driven approaches.

These approaches are very flexible and modular, but in robotics it is difficult and dangerous to collect data, because this requires a machine that interacts with humans or the environment.

And, as we know, these approaches need a huge amount of data to work.

Moreover, classic networks are black boxes, so it is difficult to guarantee safety.

But in robotics we have a lot of models that we didn't have for image recognition.

So, how can the two approaches be combined?

The idea is to use physical and biological inspiration to structure the neural network and guide the learning process. In this presentation I will introduce some preliminary results in this direction.

In this slide I just want to show my multidisciplinary background, which is particularly suitable for the type of project I want to carry out.

The pictures show some projects I completed during my career, in both my bachelor's and my master's degree.

The bachelor's was more oriented to data-driven approaches, and the master's focused on mechanics and control theory.

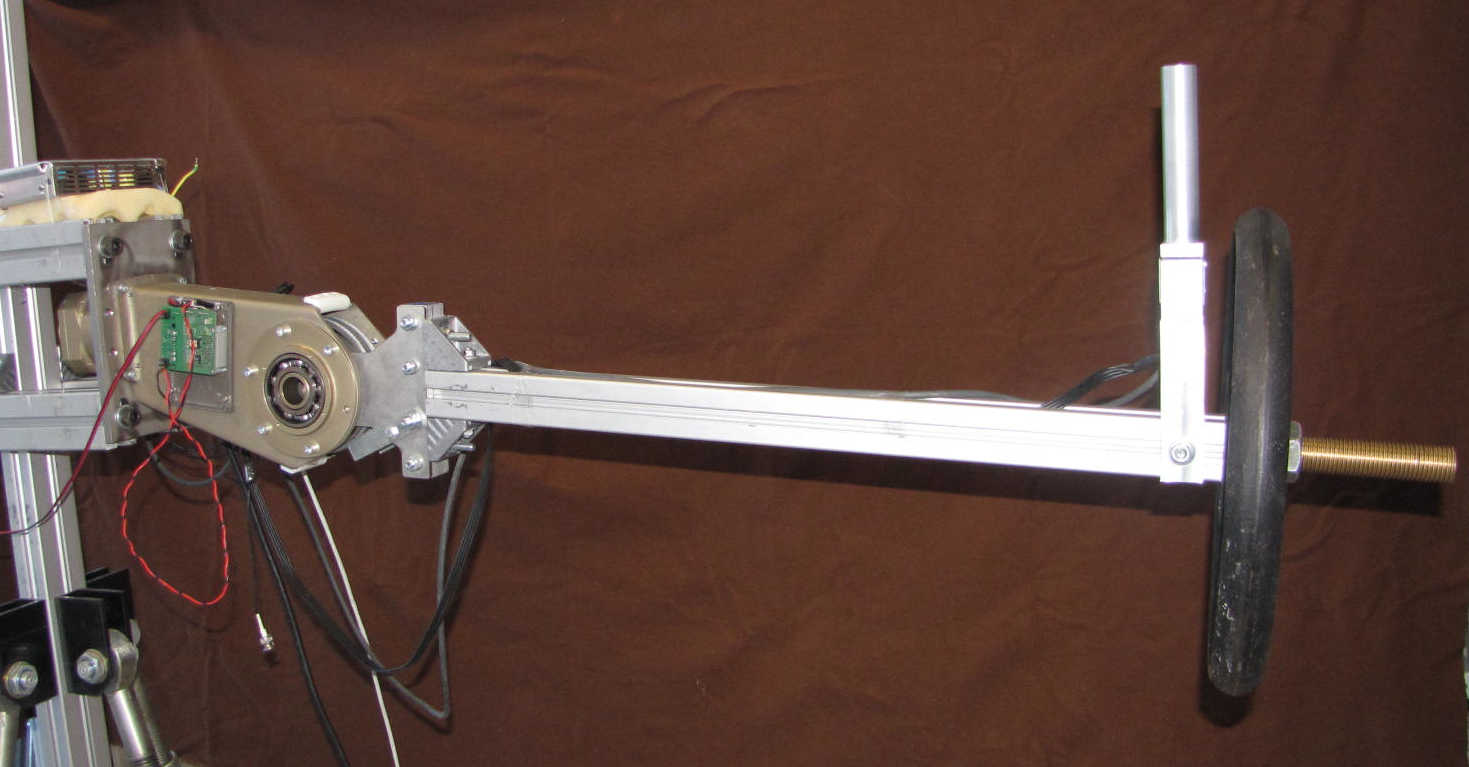

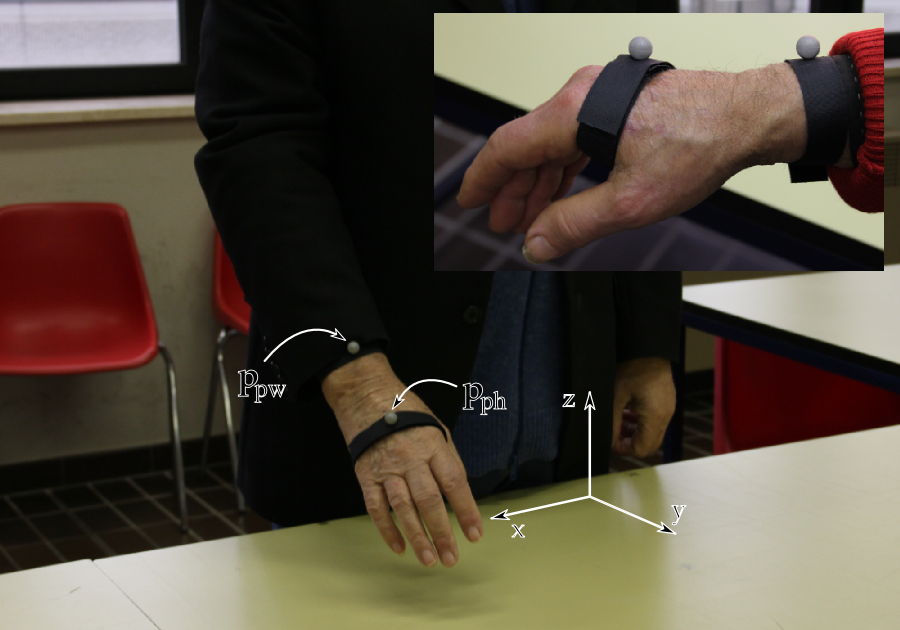

This was my master's thesis. The goal was to build a robust controller for the arm of the Body Extender system, a full-body machine built at the Percro Laboratory of Scuola Superiore Sant'Anna.

The video shows the system in action: I was driving the arm using an internal force sensor, perceiving the robotic arm as a mass in a vacuum.

Let's see the controller.

The objective was to control the upper limb of the Body Extender using a force sensor placed between the user and the extender.

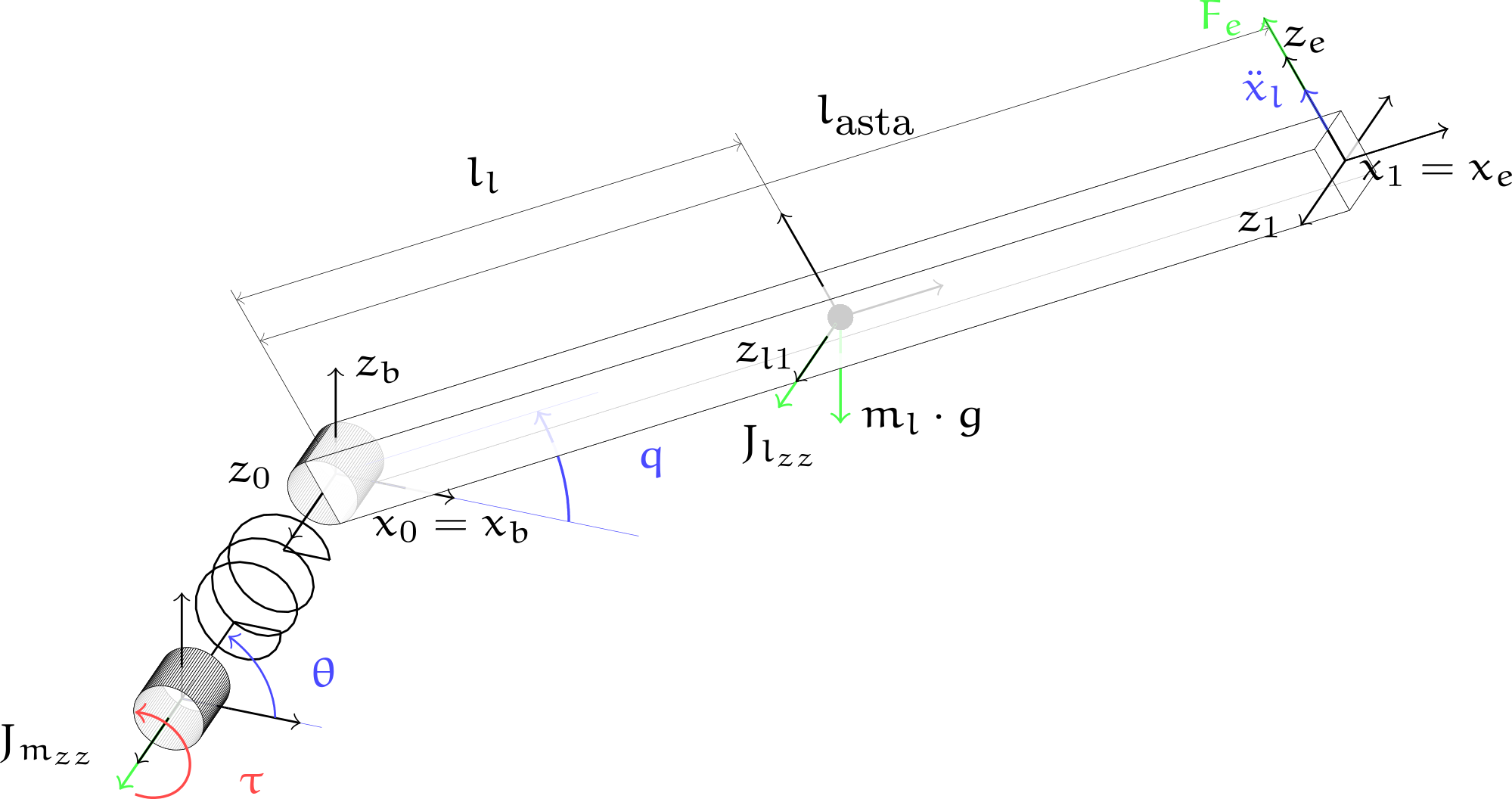

As preliminary work I focused on a single-degree-of-freedom joint, which I modelled as shown on the left of the slide.

The aim of the control law is to make the user perceive a percentage of the weight and inertia of the lifted load and, when the system is unloaded, a virtual inertia.

The trajectory is generated by admittance control and tracked using feedback linearization.
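To make the admittance idea concrete, here is a minimal sketch of a trajectory generator for a single joint. It assumes the measured force drives a virtual mass-damper; the function names, the default virtual inertia and the time step are illustrative choices, not the values used on the Body Extender.

```python
def admittance_step(position, velocity, force, virtual_inertia, virtual_damping, dt):
    """Advance the reference trajectory by one time step.

    The measured interaction force drives a virtual mass-damper:
        virtual_inertia * acceleration + virtual_damping * velocity = force
    """
    acceleration = (force - virtual_damping * velocity) / virtual_inertia
    velocity = velocity + acceleration * dt   # semi-implicit Euler integration
    position = position + velocity * dt
    return position, velocity, acceleration


def simulate(force_profile, virtual_inertia=2.0, virtual_damping=0.0, dt=0.001):
    """Return the reference positions produced by a sequence of force samples."""
    position, velocity = 0.0, 0.0
    trajectory = []
    for force in force_profile:
        position, velocity, _ = admittance_step(
            position, velocity, force, virtual_inertia, virtual_damping, dt
        )
        trajectory.append(position)
    return trajectory
```

With zero damping the user feels a pure mass, which matches the "mass in a vacuum" feeling described earlier; the resulting reference would then be handed to the tracking controller.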

Since there was no sensor facing the outside, it was necessary to develop an estimator of the mass and inertia parameters of the lifted load.

I want to focus on this component.

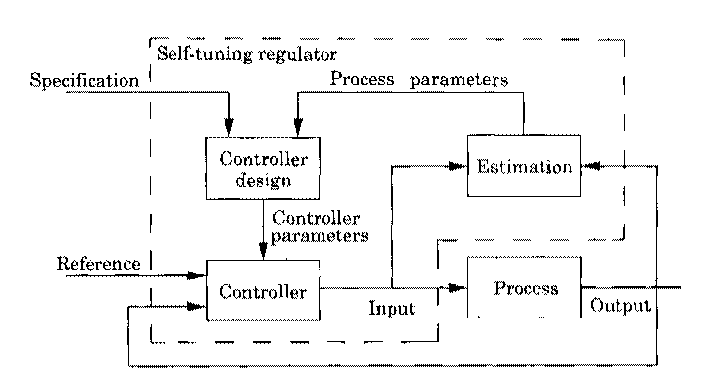

This was an example of adaptive model-based control; in particular, the scheme is called explicit self-tuning control.

In this scheme the regulator is updated using the estimated system parameters.

In my implementation both controller and parameter estimator were model-based.

This is a first example of adaptive behavior that goes toward a data-driven approach.
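As an illustration of the estimation half of a self-tuning scheme, the sketch below identifies a single inertia-like parameter online. The talk does not say which estimator was used, so recursive least squares is an assumption here, and the class name and default values are hypothetical.

```python
class ScalarRLS:
    """Recursive least squares for the scalar model y = theta * phi."""

    def __init__(self, theta0=0.0, p0=1000.0, forgetting=1.0):
        self.theta = theta0           # current parameter estimate
        self.p = p0                   # estimate covariance (large = low confidence)
        self.forgetting = forgetting  # 1.0 means no forgetting

    def update(self, phi, y):
        """Update the estimate with one regressor/measurement pair."""
        gain = self.p * phi / (self.forgetting + phi * self.p * phi)
        self.theta += gain * (y - phi * self.theta)
        self.p = (self.p - gain * phi * self.p) / self.forgetting
        return self.theta
```

In an explicit self-tuning loop, `phi` could be the measured acceleration and `y` the measured force or torque; the regulator would then be recomputed from the latest `theta`.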

Let's move on to my PhD research.

The first of the main activities I want to illustrate is Veritas:

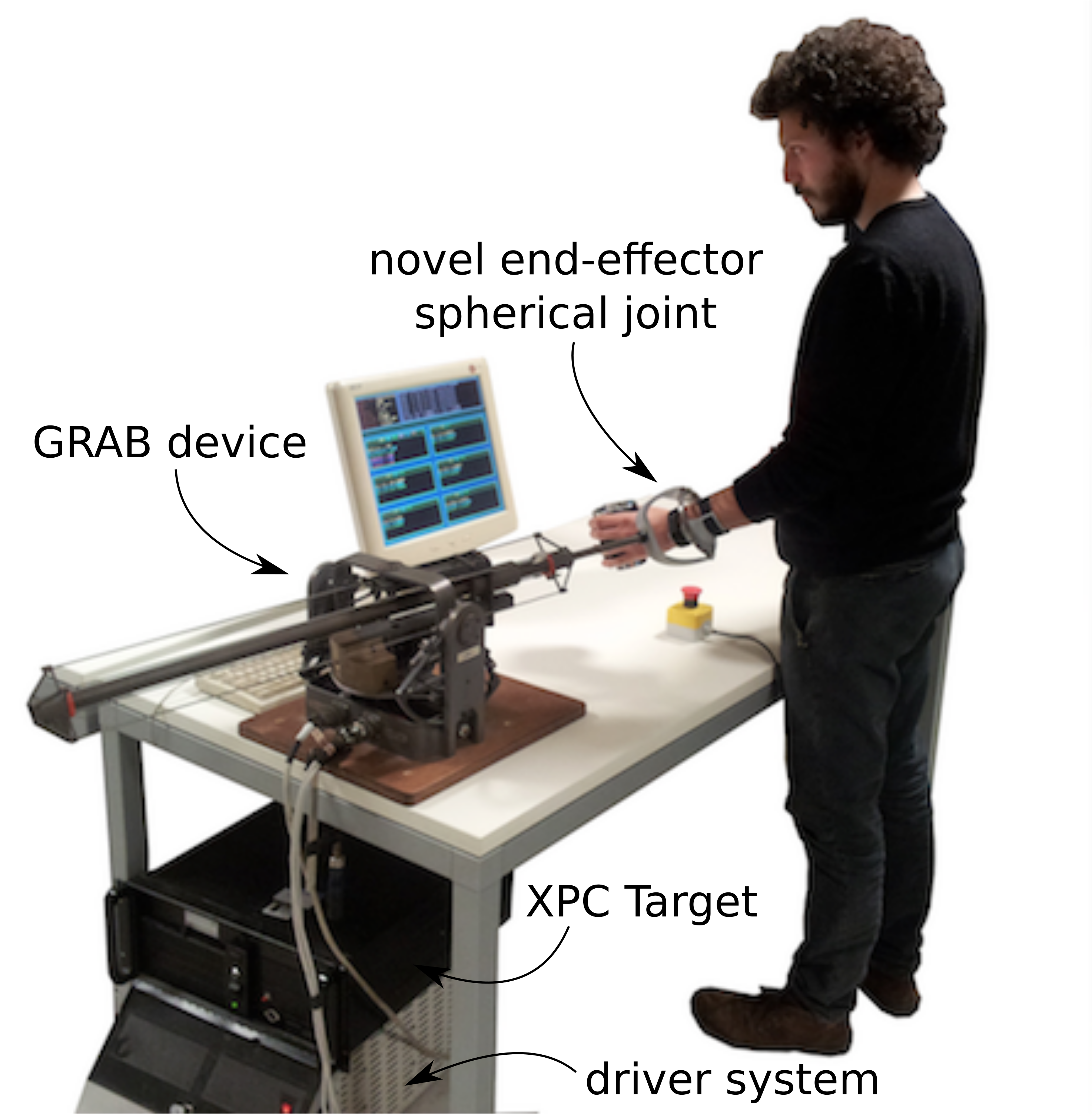

A European project focused on empathic design. Empathic design consists in giving people, in particular designers, tools to feel at first hand the sensations of an ill subject.

The project focused on developing a desktop haptic device that induces in healthy subjects a programmable hand tremor, like the one typically observed in people affected by some neurological diseases.

The second project I want to show you is Polywec, on which I wrote my PhD thesis.

Polywec is a project focused on converting marine wave energy by exploiting electro-active polymers as variable capacitors.

Going back to the Veritas device, the adaptive component is needed because many different users can use it.

The adaptive component of the system is implemented by a PID regulator driven by the amplitude error between the recorded signal and the measured one, Me.

The amplitude is calculated as the RMS amplitude over a time window of 1 second, E of s.

The estimated impedance enters the system compensator and the position estimator to adjust their behavior.
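The two building blocks just described, an RMS amplitude over a sliding window and a PID regulator acting on the amplitude error, can be sketched as follows. The class names, sample rate and gains are assumptions for illustration; how the PID output maps to the estimated impedance is specific to the device and is not reproduced here.

```python
import math
from collections import deque


class SlidingRMS:
    """RMS amplitude over a fixed time window (1 second in the talk)."""

    def __init__(self, window_seconds, sample_rate_hz):
        self.size = max(1, int(round(window_seconds * sample_rate_hz)))
        self.samples = deque(maxlen=self.size)
        self.sum_sq = 0.0

    def update(self, x):
        if len(self.samples) == self.size:
            oldest = self.samples[0]       # about to be dropped by the deque
            self.sum_sq -= oldest * oldest
        self.samples.append(x)
        self.sum_sq += x * x
        return math.sqrt(max(self.sum_sq, 0.0) / len(self.samples))


class PID:
    """Textbook discrete PID acting on an error signal."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = 0.0

    def update(self, error):
        self.integral += error * self.dt
        derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative
```

At each sample the error would be the RMS amplitude of the recorded tremor minus the RMS amplitude of the measured one, and the PID output would be used to correct the impedance estimate.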

The video shows the use of our device by an Indesit designer.

Thanks to our system he was able to feel the discomfort that people with Parkinson's disease have when using the hob, and then try to redesign it.

One result of this process was, for example, a new stove handle that is easier to use.

Again, I investigated the use of an adaptive controller because a standard controller was not sufficient.

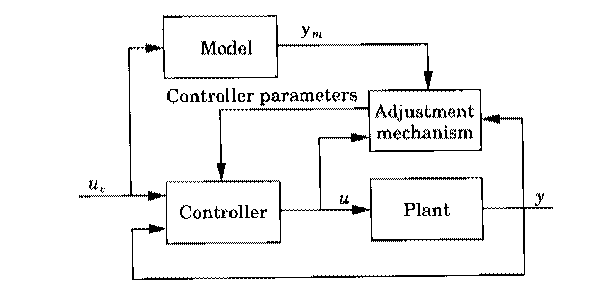

The scheme is called a model reference adaptive system.

The adjustment mechanism sets the controller parameters so as to reduce the error between the reference signal and the measured one.

Here the controller is model-based, whereas the estimator is not based on a physical model, because it is just a PID regulator.

Let's switch to Polywec. Polywec was a long project in which I made contributions in various forms.

I studied the energy production of the Poly-OWC, building a compiled Simulink model.

I tested different control schemes and harvesting cycles using a hardware-in-the-loop system.

I participated in many different test campaigns and I developed video analysis software for energy harvesting evaluation.

I synthesized a real-time controller that maximizes the energy extraction from the Poly-OWC using the results obtained from an optimization procedure.

On this last topic I wrote my PhD thesis, and I want to show it to you in detail.

In order to develop an optimization procedure that determines the best electric field profile to apply to the electroactive polymer to maximize the energy produced by the Poly-OWC system:

I modeled the oscillating water column system.

I modeled the inflatable circular diaphragm dielectric elastomer generator that sits on top of the OWC chamber, and it inflates when the level of water inside the chamber increases.

The model is based on the force equilibrium at the membrane tip between the differential pressure and the stresses.

The membrane model is coupled with an adiabatic transformation to generate the constraints for the optimization procedure.

The two curves fmin and fmax represent the force that the membrane can generate depending on the inner water level and the electric field applied to it.

The optimization procedure starts from the definition of the objective function,

which is to maximize the energy extracted within a period.

The extracted energy is written as the product of fpto (the force applied by the membrane to the water) and the velocity of the inner water level, nu.

To perform the optimization I need to write the objective function using only one variable.

Starting from Cummins' equation, which represents the oscillating water column dynamics, I wrote the state-space form, discretized it, and determined the evolution of the inner water level within a period as a function of fpto and fe,

where fe is the excitation force.

The constraints are defined by the operating limits fmin and fmax of the membrane, as explained before.
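Written out, the problem described so far has roughly the following shape. This is a sketch under assumptions: Cummins' equation is given in its generic textbook form, the convolution term is assumed to have been approximated by a finite-dimensional state before discretization, and the symbols m, m_∞, K, k, A, B, C, N and Δt are not taken from the talk. The sign convention follows the talk, where the extracted energy is the product of fpto and the velocity nu.

```latex
% Cummins' equation for the inner water level z(t), generic form (assumed)
(m + m_\infty)\,\ddot{z}(t) + \int_0^{t} K(t-\tau)\,\dot{z}(\tau)\,d\tau + k\,z(t)
  = f_e(t) + f_{pto}(t)

% Discretized state space over one period, N steps of length \Delta t
x_{k+1} = A\,x_k + B\,\bigl(f_{e,k} + f_{pto,k}\bigr), \qquad \nu_k = C\,x_k

% Optimization problem in the single variable f_{pto}
\max_{f_{pto,0},\dots,f_{pto,N-1}} \; \sum_{k=0}^{N-1} f_{pto,k}\,\nu_k\,\Delta t
\quad \text{subject to} \quad
f_{min}(z_k) \le f_{pto,k} \le f_{max}(z_k)
```

Because the state evolution is linear in fpto and fe, the velocity nu can be eliminated, which is what allows the objective to be written in terms of fpto alone.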

So I can perform the optimization procedure.

An example of the result is shown here.

The two pictures show the optimal electric field profile for two different sea states, but this behaviour is repeatable for all sea states.

The electric field applied to the membrane, which is our control variable, is shown in yellow, while the elevation of the membrane tip is shown in black.

Charging takes place when the membrane is most deformed, but the charging instant depends on the frequency and amplitude of the incoming wave.

This behaviour has the following explanation.

Because the electric field decreases the stiffness of the membrane, the longer it is kept activated, the less stiff it is.

So the membrane is kept activated for longer when the period of the incoming wave is longer, and for less time when it is shorter.

As explained before, given the repeatability of the optimal charging cycles it was possible to synthesize a heuristic that could work in real-time.

This heuristic consists in charging the membrane when the capacitance is close to its maximum and discharging it when the capacitance is at its minimum.

The heuristic uses a lookup table, indexed by the incoming wave parameters, to define the best charging instant through a threshold on the derivative of the pressure.
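A rough sketch of how such a heuristic could be coded is shown below. Everything specific is hypothetical: the table values are placeholders, the table is indexed by wave period only, and the charging trigger is assumed to be the pressure derivative dropping through the threshold. The real controller may differ on all of these points.

```python
# Hypothetical lookup table: (wave period in s, threshold on dp/dt in Pa/s).
# The numbers are placeholders, not results from the optimization.
THRESHOLD_TABLE = [(6.0, 80.0), (8.0, 60.0), (10.0, 40.0)]


def lookup_threshold(table, wave_period):
    """Return the threshold of the table entry whose period is closest."""
    return min(table, key=lambda row: abs(row[0] - wave_period))[1]


def control_step(charged, prev_rate, rate, capacitance, c_min, threshold, tol=0.05):
    """Return the new charge state (True means the electric field is applied).

    charged      current charge state
    prev_rate    pressure derivative at the previous sample
    rate         pressure derivative at the current sample
    capacitance  current membrane capacitance
    c_min        capacitance of the undeformed membrane
    """
    if not charged and prev_rate > threshold >= rate:
        return True    # pressure is close to its peak: charge
    if charged and capacitance <= c_min * (1.0 + tol):
        return False   # capacitance back near its minimum: discharge
    return charged
```

The lookup step plays the role of the gain schedule: the wave parameters select the threshold, and the threshold fixes the charging instant within each cycle.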

This slide shows the system in action.

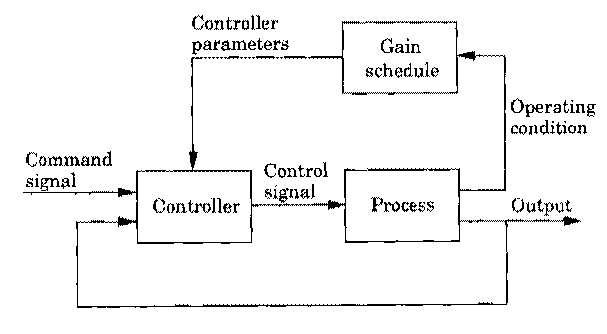

Also in this case I used an adaptive control scheme, called gain scheduling.

Referring to the picture, in our case the controller is derived from the optimal control solutions, and the gain schedule is based on the incoming wave parameters, which are the operating conditions.

Now I present the first preliminary results obtained during my PostDoc at the University of Trento, focusing on the objective of the presentation.

What have we seen so far?

Applications of standard methods, from adaptive control to optimal control.

But can we be more flexible and adaptive? The answer is yes,

by mixing in the flexibility of data-driven methods:

obtaining neural networks with a physical structure and applying reinforcement learning to high-level behaviors.

So I want to present the first preliminary results obtained in the Dreams4Cars project.

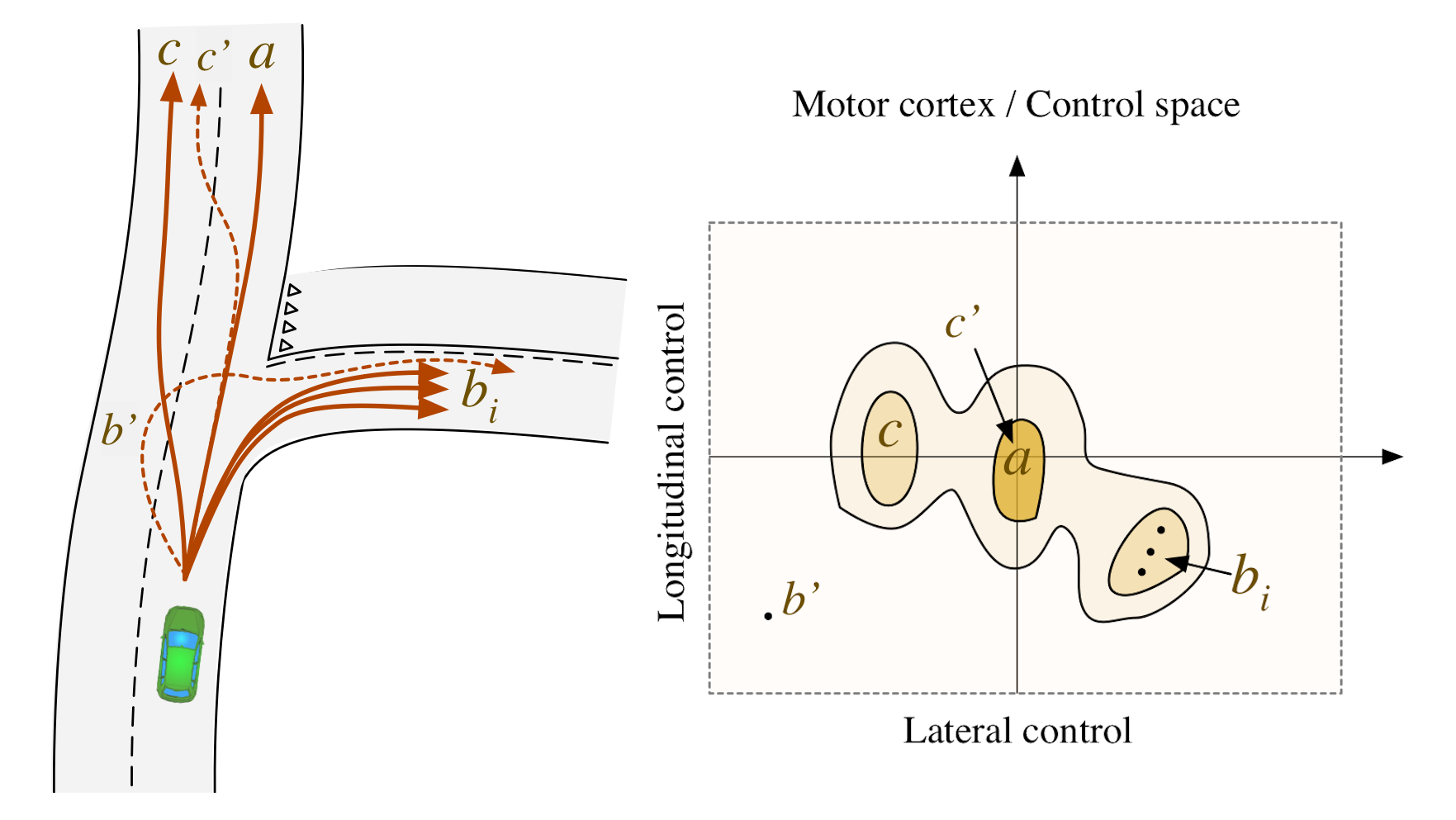

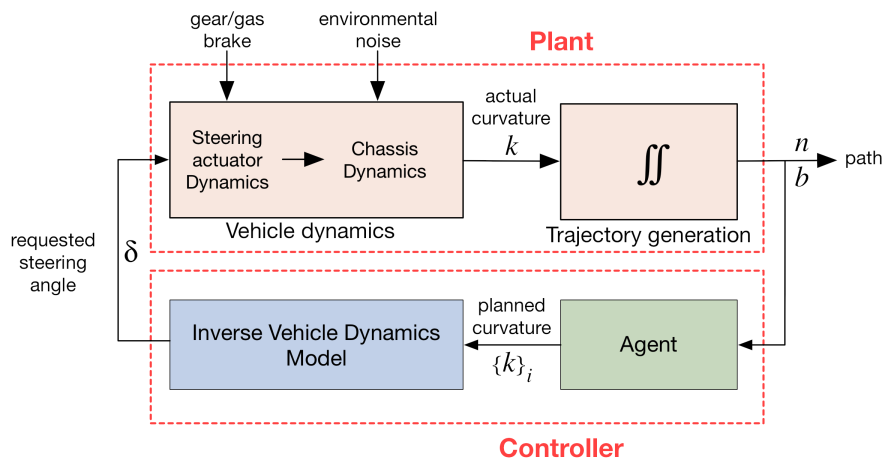

Dreams4Cars is a robotics project that develops a bio-inspired autonomous agent. The cognitive architecture is applied to autonomous driving.

- The bio-inspired agent uses the motor cortex concept, which is a way to represent the control space.

- Each point in the motor cortex is an action that encodes a minimum jerk trajectory (red arrow in the brain).

- The points are biased to steer the system (yellow arrow), for example to implement traffic rules.

- The best trajectory is chosen using action selection (green arrow).

- From the best trajectory we use an inverse model, instead of the classic MPC, to get the steering wheel and accelerator controls for the vehicle (blue and purple arrows).
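The first steps of this pipeline can be illustrated with a small sketch. It uses the classic rest-to-rest minimum jerk polynomial as a simplified stand-in for the trajectories encoded in the motor cortex, and a plain winner-takes-all rule for action selection; the action names, salience values and biases are invented for the example.

```python
def minimum_jerk(x0, xf, duration, t):
    """Rest-to-rest minimum jerk position at time t (zero velocity and
    acceleration at both ends)."""
    tau = min(max(t / duration, 0.0), 1.0)
    shape = 10.0 * tau**3 - 15.0 * tau**4 + 6.0 * tau**5
    return x0 + (xf - x0) * shape


def select_action(salience, bias):
    """Winner-takes-all over candidate actions after adding the steering bias."""
    best_action, best_value = None, float("-inf")
    for action, value in salience.items():
        total = value + bias.get(action, 0.0)
        if total > best_value:
            best_action, best_value = action, total
    return best_action


# Hypothetical candidates: a negative bias discourages an action, as a
# traffic rule might.
chosen = select_action(
    {"left": 0.4, "straight": 0.6, "right": 0.5},
    {"straight": -0.3},
)
```

In the architecture described above, the selected trajectory would then go through the inverse model to obtain the steering and accelerator commands.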

In this framework I participated in several works that cover different components of the architecture shown. In particular:

- Stability and robustness analysis of vehicle lateral control based on dynamics quasi-cancellation: a work aimed at analyzing the stability of the control loop when the model is not exactly canceled by the inverse control.

- A robust way to perform action selection within the control space.

- A flexible and modular approach for modelling longitudinal vehicle dynamics, which is the first topic that I want to explain in detail.

- How to deal with uncertain situations via reinforcement learning, which is the second.

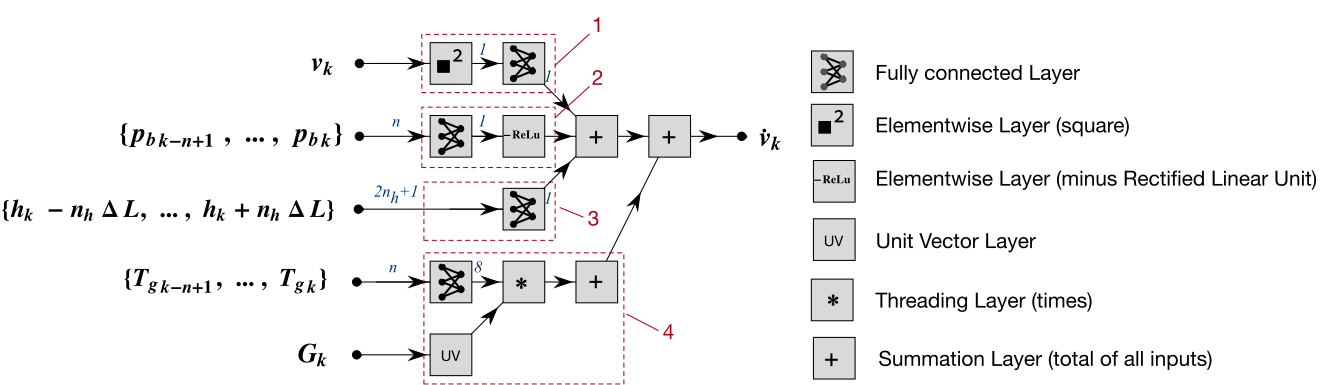

In this work we started from the assumption that the vehicle is a causal system and the forces acting on it can be considered additive.

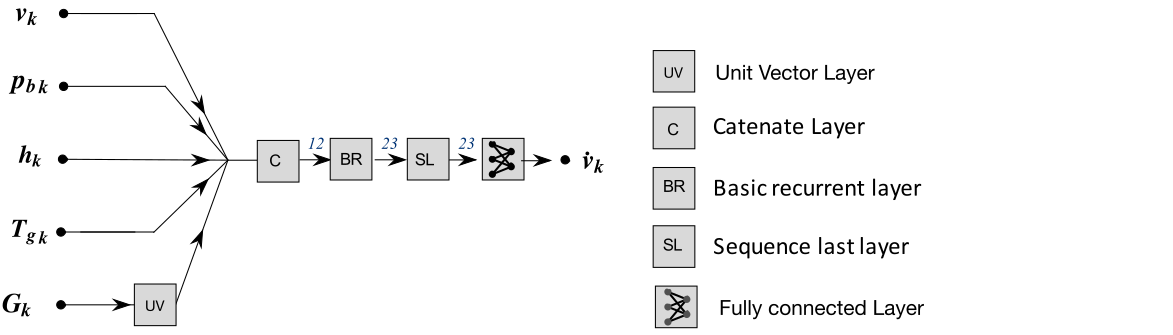

The picture depicts the neural network. Here the structure is the novel element. Each branch of the network represents a force contribution:

- The air drag in blue.

- The slope of the road in white and black.

- The engine with the relative gear in green.

- The brake in red.

- The output of the network is the predicted acceleration in yellow.
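The additive structure listed above can be sketched as a sum of independent branches, one per force contribution. The branch shapes and parameter names below are guesses made for illustration (in the project each branch is a learned sub-network); only the idea of summing separate contributions comes from the talk.

```python
import math


def predict_acceleration(speed, slope_rad, throttle, gear, brake, params):
    """Sum of per-force contributions, mirroring the branches of the network.

    params is a hypothetical dictionary of tunable values:
      drag         coefficient multiplying speed squared
      gravity      gain on the sine of the road slope
      engine_gain  one gain per gear
      brake_gain   gain on the brake command
    """
    contributions = {
        "drag": -params["drag"] * speed * speed,
        "slope": -params["gravity"] * math.sin(slope_rad),
        "engine": params["engine_gain"][gear] * throttle,
        "brake": -params["brake_gain"] * brake,
    }
    return sum(contributions.values()), contributions


example_params = {
    "drag": 0.001,
    "gravity": 9.81,
    "engine_gain": {1: 4.0, 2: 2.5},
    "brake_gain": 8.0,
}
acceleration, parts = predict_acceleration(10.0, 0.0, 0.5, 2, 0.0, example_params)
```

Returning the individual contributions alongside the total is what makes this kind of model easy to inspect: each term can be checked against physical intuition.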

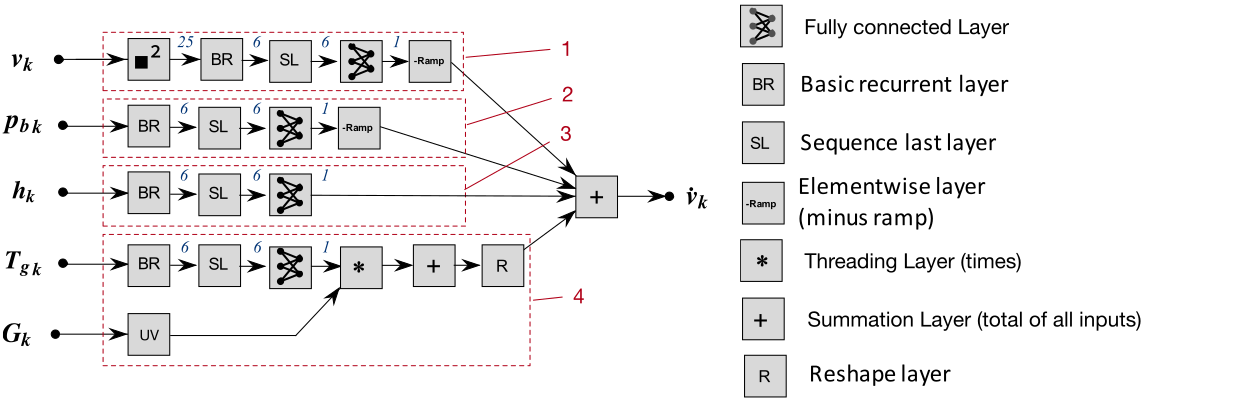

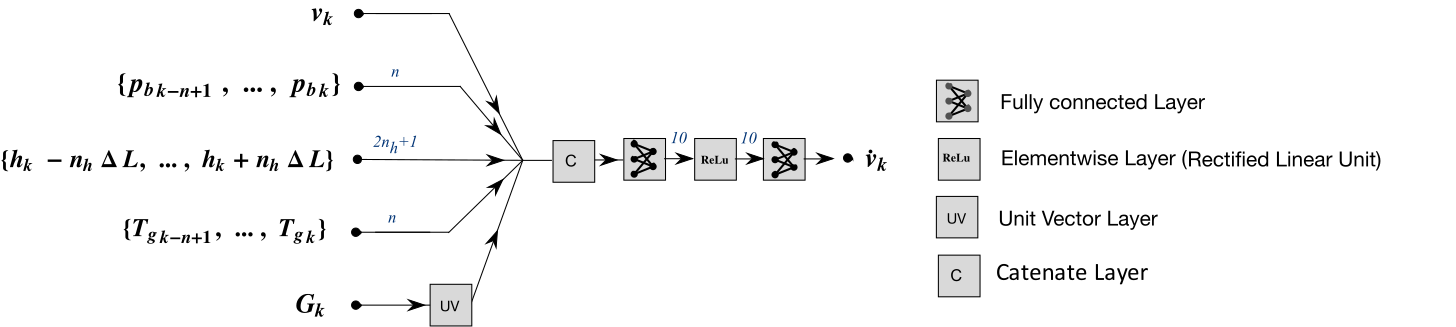

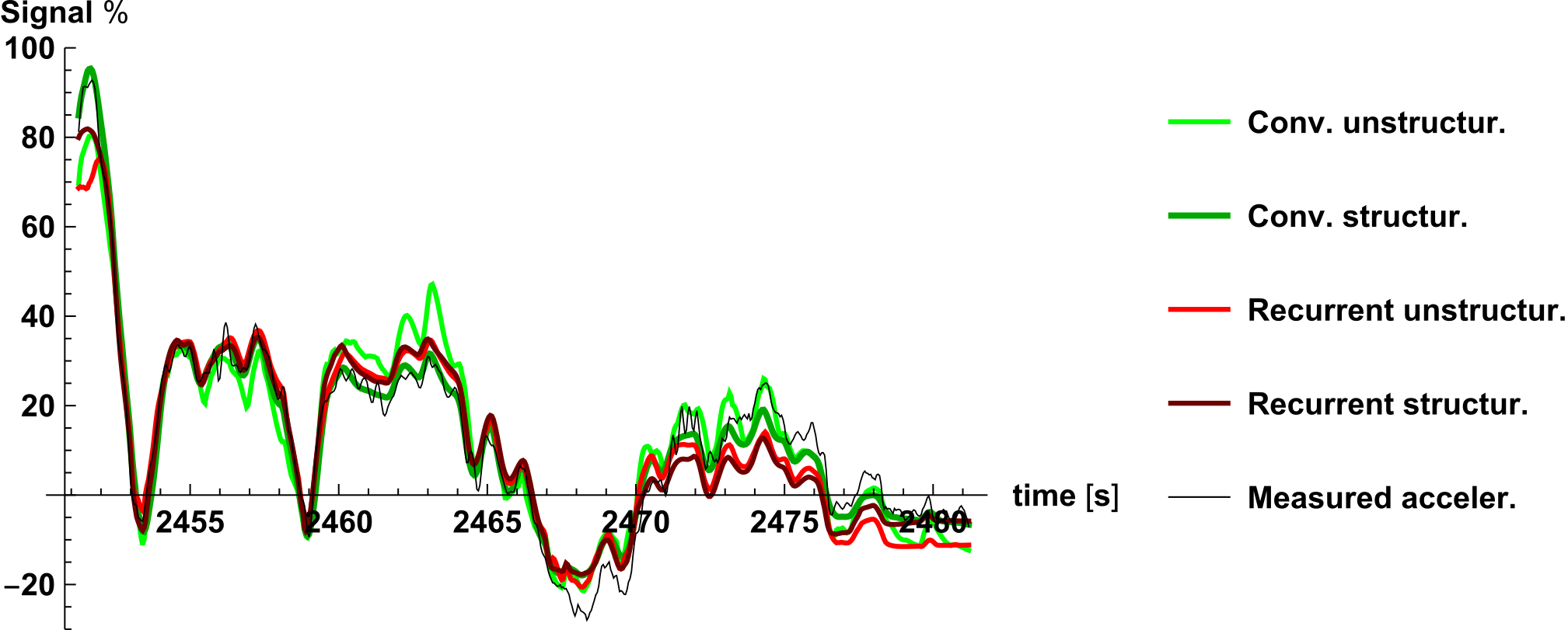

Here are the other structures we analyzed.

The most promising is the convolutional structured network.

This network has a low number of parameters, a good fit to the data and high interpretability.

The plot shows the value of the acceleration over time for all types of networks.

The dark green line represents the fit of the structured neural network, which is the only network that estimates well the initial peak and the values around zero.

A neural network is a differentiable computational graph, and in this framework gradient descent algorithms can be applied to easily tune the parameters.

This approach is flexible: it is easy to add information to the model, and what I don't know I can learn from data.

If I give the network a structure inspired by the equations of motion, I reduce the number of parameters and the data needed, and therefore the experiments to collect them.

Moreover, the network parameters are explainable.

But we can do more.

It is possible to use neural networks for control as well.

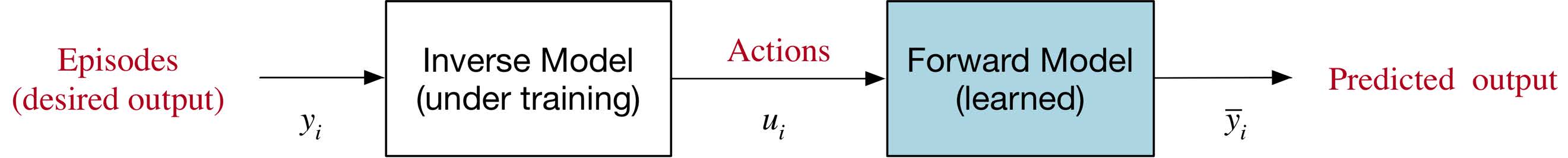

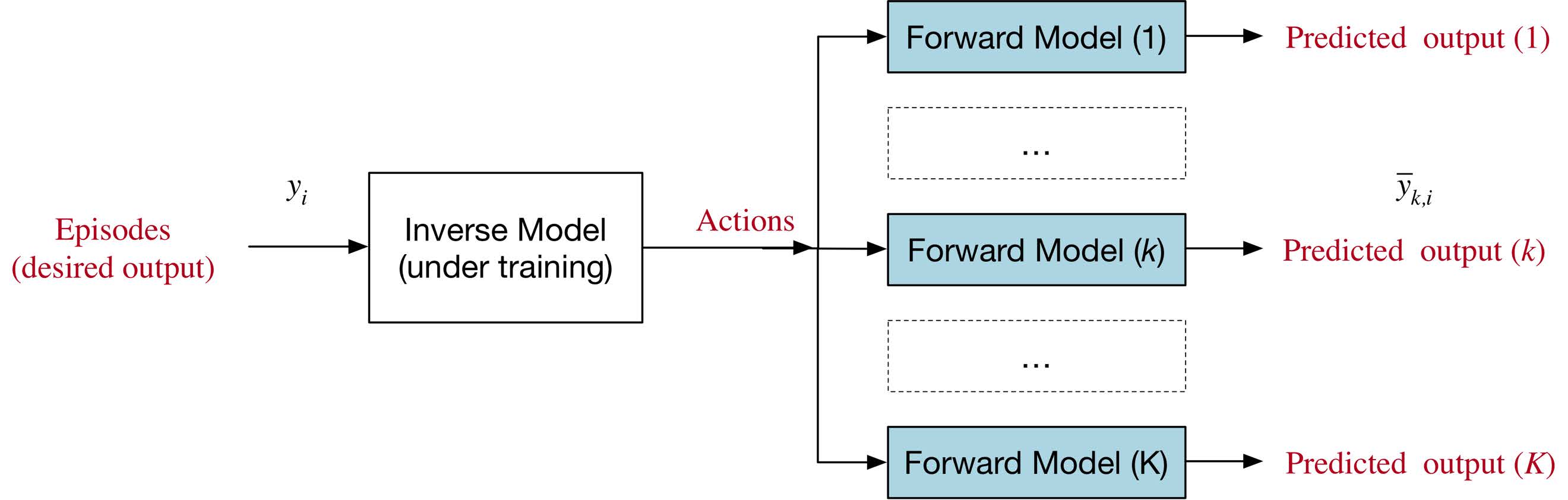

Here is shown how to obtain a neural network for control.

An interesting way is to use the direct network, already trained, in series with a neural network that implements an inverse model.

In this way the input and the output of the series of the two networks have to be equal.

So we can expand the dataset by generating training episodes at will, reducing the number of experiments needed to collect them.
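The series arrangement can be shown on a toy problem. In the sketch below a fixed affine function stands in for the already trained direct network, and the inverse model is a two-parameter affine map trained by gradient descent so that the cascade reproduces its input. Both models and all numbers are illustrative assumptions, far simpler than the networks used on the vehicle.

```python
import random


def forward_model(u):
    """Stand-in for the already trained direct network (kept frozen)."""
    return 2.0 * u + 1.0


def forward_model_grad(u):
    """Derivative of the frozen forward model with respect to its input."""
    return 2.0


def train_inverse(steps=5000, learning_rate=0.01, seed=0):
    """Train u = w * target + b so that forward_model(u) matches target."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(steps):
        target = rng.uniform(-1.0, 1.0)   # generated sample, no experiment needed
        u = w * target + b                # inverse model output (control)
        error = forward_model(u) - target
        # Backpropagate the squared error through the frozen forward model.
        grad_u = 2.0 * error * forward_model_grad(u)
        w -= learning_rate * grad_u * target
        b -= learning_rate * grad_u
    return w, b


w, b = train_inverse()
```

Only the inverse parameters are updated; the forward model is used solely to carry the error back, and the training targets are generated at will rather than measured.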

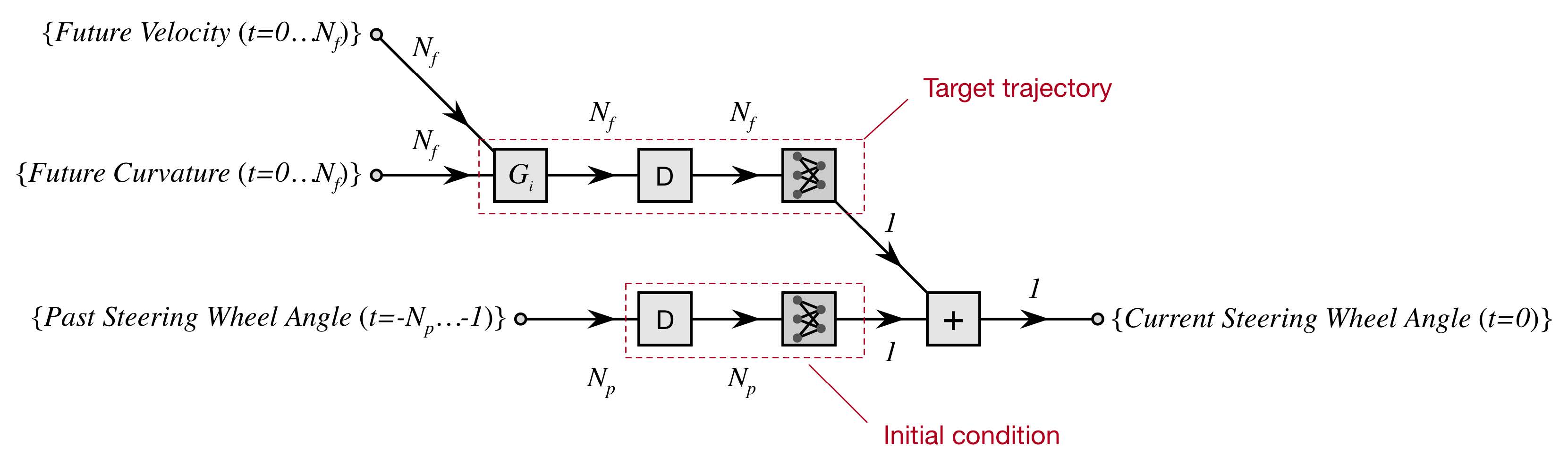

Here is shown an example of a neural network for the inverse model of the Jeep Renegade lateral dynamics.

The four videos show how this architecture, based on a neural network inverse model, works on different cars and simulators.

These results show the flexibility of this approach applied to different platforms.

On the left side there is the Jeep Renegade.

On the top there is the Miacar of DFKI.

On the bottom there are the two simulators: CarMaker and OpenDS.

Let's move on to the second topic: dealing with uncertain situations.

Think about this situation:

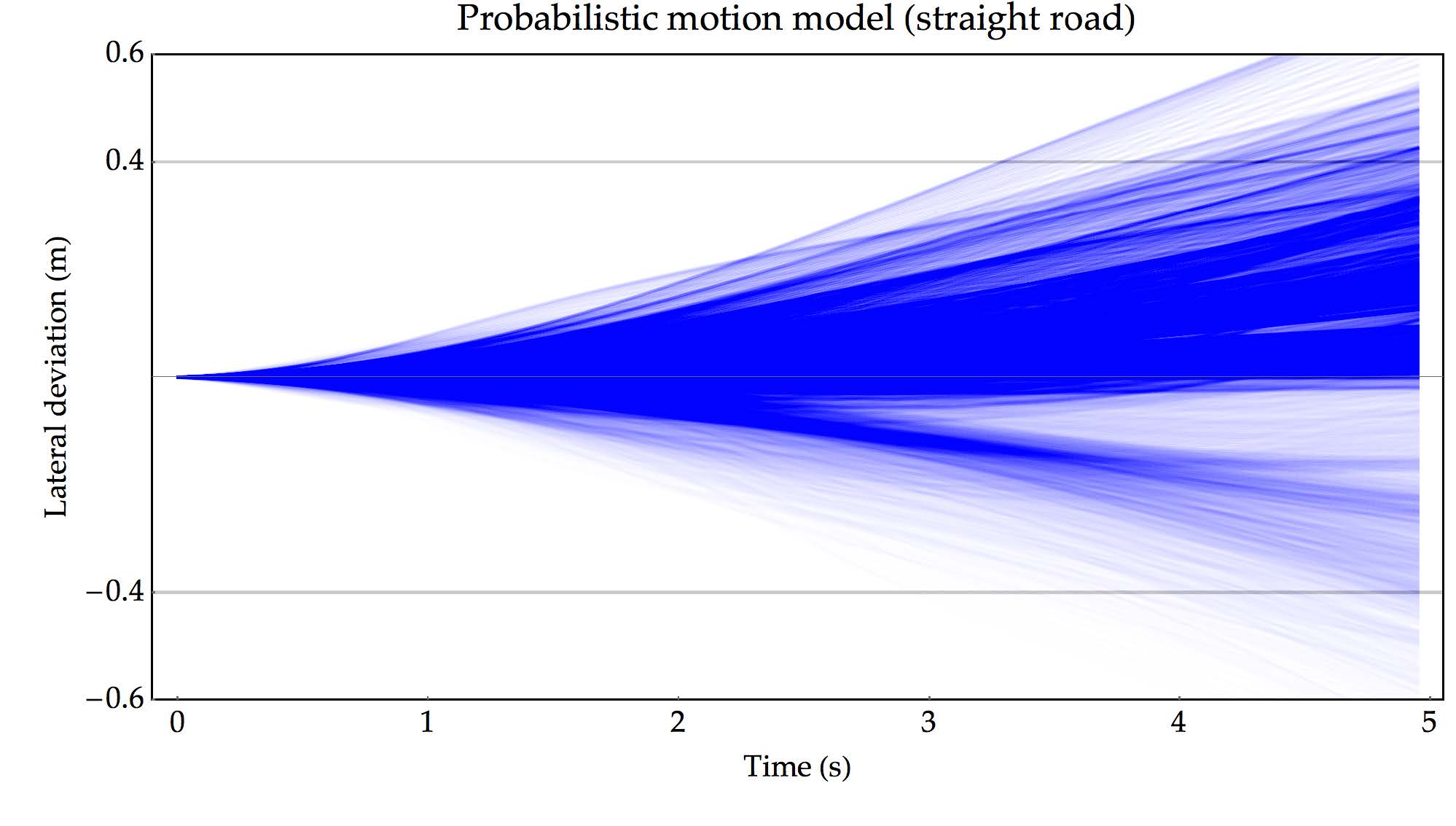

a pedestrian walks on the sidewalk and may suddenly cross the road.

It is pretty difficult to deal with this situation using a deterministic agent.

The agent predicts the behavior of the pedestrian only when he or she starts to cross the road, but by then it is too late.

The video shows our agent before the integration of the neural network for safe speed.

The autonomous agent cannot stop in time because its speed is too high.

I decided to deal with this situation using a reinforcement learning framework.

Let's see it in detail.

If this situation becomes a training scenario for a reinforcement learning framework, I can deal with it.

This approach, however, has the problem of a long training phase, so in order to reduce it,

- we integrated our autonomous agent developed in Dreams4Cars with the RL framework,

- so we focused on learning only what is fundamental, the safe speed. The neural network is not driving the car but only gives a suggestion on the safe speed, so that, in case the pedestrian crosses the road, the autonomous agent can stop the vehicle and avoid the impact.

- We used a simple neural network inside the reinforcement learning framework so it can be explainable.

The neural network does not drive the vehicle but suggests to the agent the safe speed by choosing the requested cruising speed.

The network takes as input the longitudinal and lateral position, velocity and course of the pedestrian, the velocity of the car and the requested cruising speed.

The requested cruising speed is increased, decreased or kept by the network at each instant, as shown in the picture.

In order to choose the correct safe speed, during training the network estimates the future reward for each action, given a state, namely the Q value.

A positive reward is given to the RL agent when the car reaches the end of the road without impact; otherwise the reward is negative.

During the simulation, data are collected and the network is trained to better estimate the action value Q.
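The update at the heart of this training loop can be sketched in a few lines. To keep it short, the sketch replaces the neural network with a lookup table (plain tabular Q-learning), so it only illustrates the temporal difference update and the three-action choice; the state labels, learning rate and discount factor are assumptions.

```python
import random

ACTIONS = ("decrease", "keep", "increase")   # applied to the requested cruising speed


def q_update(q, state, action, reward, next_state, done, alpha=0.1, gamma=0.99):
    """One Q-learning step: move Q(state, action) toward the TD target."""
    if done:
        best_next = 0.0   # no future reward after the episode ends
    else:
        best_next = max(q.get((next_state, a), 0.0) for a in ACTIONS)
    td_target = reward + gamma * best_next
    old_value = q.get((state, action), 0.0)
    q[(state, action)] = old_value + alpha * (td_target - old_value)
    return q[(state, action)]


def epsilon_greedy(q, state, epsilon, rng):
    """Explore with probability epsilon, otherwise pick the best known action."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: q.get((state, a), 0.0))
```

In the deep version the table `q` is replaced by the network, whose inputs are the pedestrian and vehicle quantities listed above and whose outputs are the Q values of the three actions.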

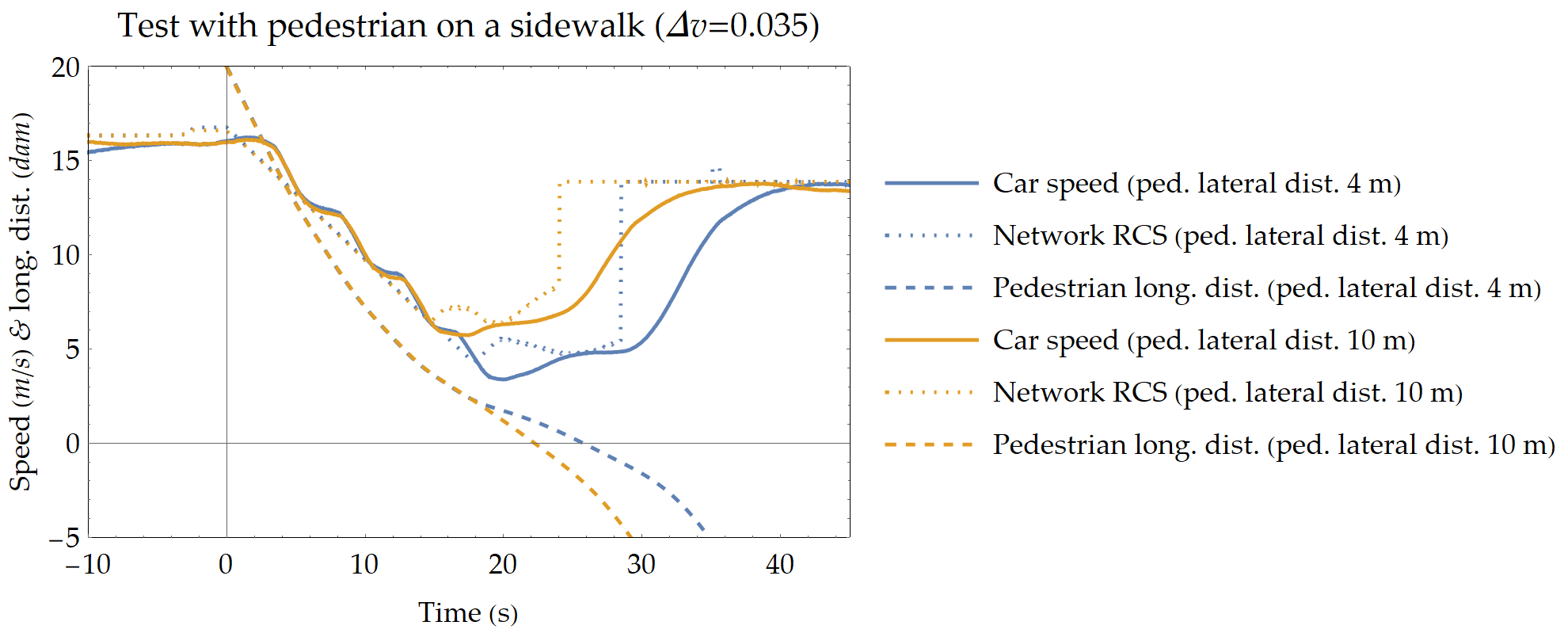

This last slide shows the results on the real CRF vehicle.

This result proves that it is possible to transfer what we learned in simulation to a real vehicle.

The network can be applied to a real vehicle because it does not drive the vehicle but only suggests the safe speed.

The blue lines refer to the situation with the pedestrian at a lateral distance of 4 m,

whereas the orange lines refer to the situation at 10 m.

The network is more cautious when the pedestrian is closer to the street.

This technique is called deep Q-learning, and it is a temporal difference method, off-policy and model-free.

In our case Q-learning is applied to a simple network, so it can be explainable.

The RL is used only to learn what is needed, thanks to the integration with the autonomous agent.

Transfer learning is applicable.

It would also be possible to synthesize, as I did for the Poly-OWC, simple logic from the neural network behavior.

Another field is vehicles, in particular setting up a simulator and real vehicles.